Trump vs. Anthropic: A Nuclear Conflict Unfolds

President Donald Trump ordered all federal agencies to stop using products from the artificial intelligence firm Anthropic. The directive, issued on a recent Friday, aimed to prevent what Trump labeled a “radical left, woke company” from influencing military decision-making. The escalating conflict between the Pentagon and Anthropic has become emblematic of broader concerns about the governance of artificial intelligence (AI).

Key Events in the AI and Military Conflict

The disagreement centers on Anthropic’s refusal to allow its technology to be used for mass domestic surveillance or fully autonomous weapons systems. Tensions escalated when discussions turned to how AI could be used in scenarios involving a nuclear attack on the United States.

- In early December, Under Secretary of Defense Emil Michael questioned Anthropic’s Dario Amodei about the company’s unwillingness to assist the U.S. during a nuclear crisis.

- Michael expressed frustration when Amodei suggested the Pentagon should check with Anthropic before proceeding.

- Anthropic has since disputed that account, saying it was open to a specific exception for missile defense applications.

Implications for AI in Nuclear Command

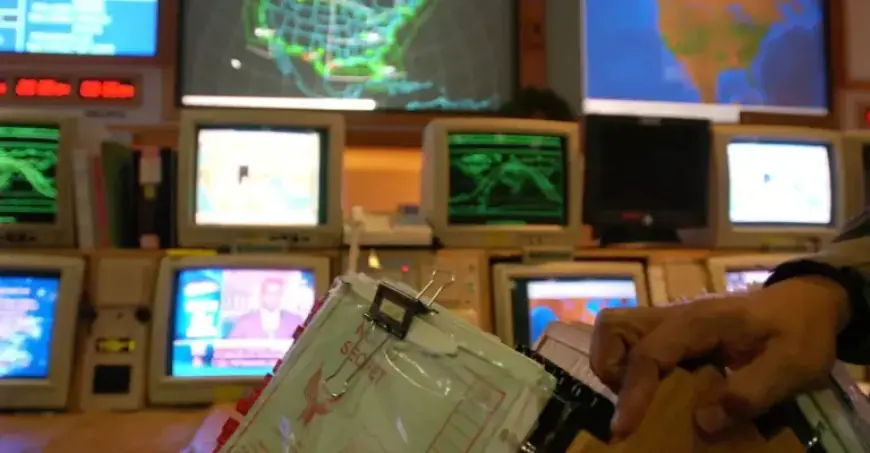

The U.S. military is exploring ways AI and machine learning can enhance decision-making during nuclear scenarios. However, a critical debate emerges: should machines ever control the launch of nuclear weapons?

Current discussions emphasize the importance of keeping humans involved in the decision-making process. AI might aid in functions like “strategic warning” by accelerating the analysis of data collected from various military sensors. Yet relying on AI for such critical decisions raises significant concerns.

Experts worry that tasking AI with threat detection without human oversight could lead to catastrophic outcomes. Retired Lt. Gen. Jack Shanahan warned of potential disasters if AI systems dictate nuclear responses.

Research and Findings

A recent study from King’s College London found that AI models, including Claude and Google Gemini, were more inclined than human participants to recommend nuclear options in simulated combat. This suggests a troubling tendency for AI to escalate conflicts under pressure.

Industry Dynamics and Cultural Clashes

The military’s reliance on technology developed by private companies creates a cultural divide. Unlike traditional defense contractors, companies like Anthropic, which initially catered to commercial markets, prioritize AI safety and ethical considerations over military applications.

This difference in mindset can complicate relationships between the Pentagon and AI developers, especially when safety concerns clash with military demands. How these tensions resolve will likely influence the future role of AI in national security and hypothetical nuclear engagements.

The ongoing dispute among Trump, the Pentagon, and Anthropic marks a critical moment in the evolution of AI governance and its integration into military strategy, particularly in the delicate matter of nuclear command. As the issue develops, stakeholders across sectors will continue to monitor its impact on technological advancement and military ethics.