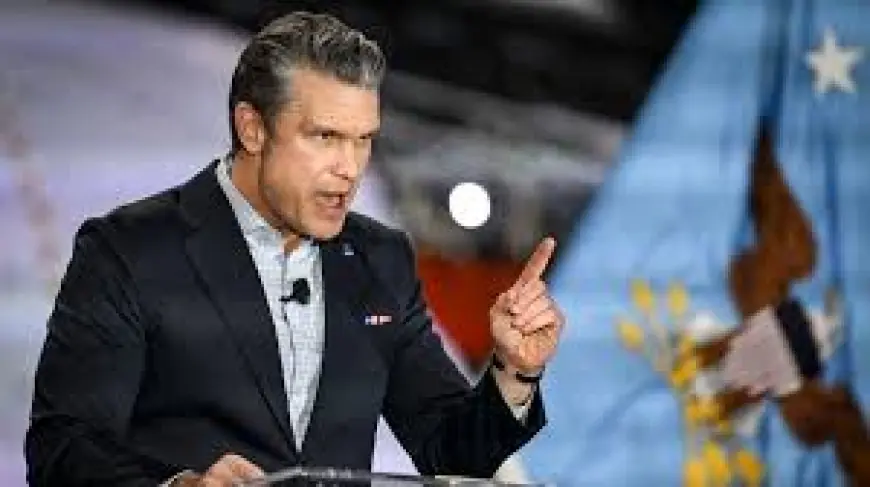

What Is Anthropic and Why the Pentagon Standoff Raises Immediate Uncertainty for Military AI Policy

The question "What is Anthropic?" has moved from technical curiosity to strategic risk as headlines link a Pentagon standoff with moves to blacklist Anthropic and simultaneous efforts to de-escalate. This matters now because those parallel developments create a policy limbo: defense planners, AI developers, and corporate leaders will feel the effects first while broader rules for military use of artificial intelligence hang in the balance.

What Is Anthropic: why the standoff highlights rapid uncertainty and limited guardrails

At the heart of the debate is uncertainty about how AI tools will be governed, procured, or restricted when national security priorities collide with commercial innovation. The recent press cycle combines three threads: a Pentagon standoff framed as decisive for AI in war, a political push to blacklist Anthropic, and an effort by a prominent industry leader to calm tensions while employees at that leader's company publicly back Anthropic. Together, these threads create open-ended risks for policy and procurement.

Here’s the part that matters: when political moves, defense posture, and corporate diplomacy all happen at once, the result is not a single policy outcome but a field of potential disruptions. Companies that build advanced AI systems face unclear rules about whether their technology will be available to military users, barred by political action, or subject to rapid regulatory change. That uncertainty can slow research, shift investment, or push development offshore.

- Immediate implication: procurement and partnership choices by defense agencies could stall while legal and political options are considered.

- Groups affected early: AI engineers, employees who publicly back peers, corporate leaders navigating reputational and operational risk, and defense planners seeking reliable suppliers.

- Financial and strategic signals to watch: corporate statements and workforce reactions that indicate whether companies will resist or accommodate political restrictions.

- Operational effect: capacity to integrate advanced models into defense systems may be delayed or fragmented if blacklisting or restrictions expand.

It’s easy to overlook, but the interplay between political actions and industry responses often sets longer-term norms: a temporary blacklist or a public show of support can have outsized influence on how contractors and allies choose partners going forward.

Event details sketched from recent headlines

Recent headlines present three linked developments: a Pentagon standoff described as decisive for the future use of AI in war; a political move to blacklist Anthropic tied to an AI dispute with the Pentagon; and a separate effort by a major AI leader to help de-escalate tensions while employees at that company voice support for Anthropic. Those are the explicit elements in the coverage that is driving today’s policy uncertainty.

The real question now is whether these strands converge into a stable framework or produce episodic restriction cycles. If blacklisting gains traction, firms may re-evaluate work with defense customers or accelerate alternative partnerships. If de-escalation succeeds, informal norms could emerge that keep access open while limiting certain applications. Either path leaves unanswered how long ambiguity will persist and which rules will become binding.

Key indicators that would clarify the next phase include formal policy proposals, legislative action addressing AI defense use, and shifts in corporate conduct such as hiring freezes or public campaigns. Those signs would signal whether the standoff resolves into a predictable regime or into recurring clashes between political actors and AI firms.

What's easy to miss is that this moment is less about any single company and more about how political pressure, defense requirements, and workforce activism interact to set the practical limits on AI deployment in sensitive contexts.

For readers tracking the debate: expect more public posturing before durable rules emerge, and watch for concrete moves that translate headlines into policy or commercial change. The situation is still developing, and details may change.