Anthropic AI Draws Washington Focus as Pete Hegseth Highlights CORE Security Initiative

Anthropic AI moved to the center of U.S. defense and technology policy discussions this week after Defense Secretary Pete Hegseth referenced a new “CORE” security framework aimed at strengthening artificial intelligence systems used in national defense. The comments come amid intensifying competition between U.S. and Chinese AI developers and growing concern across the United States, United Kingdom, Canada, and Australia about how advanced AI tools are deployed safely in government and military environments.

Anthropic AI Expands Role in U.S. Government Discussions

Anthropic, the San Francisco–based artificial intelligence company behind the Claude family of large language models, has positioned itself as a safety-focused AI developer. Founded by former OpenAI researchers, the company emphasizes alignment, constitutional AI principles, and controlled deployment of powerful generative systems.

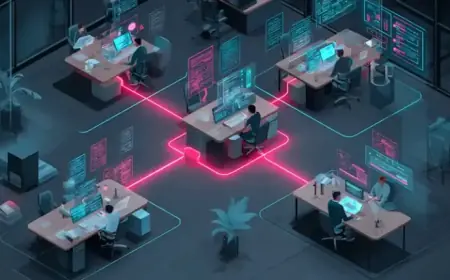

In recent months, Anthropic AI has increased engagement with U.S. federal agencies, particularly around cybersecurity, language analysis, and decision-support systems. Policymakers view frontier AI models as dual-use technologies—capable of driving economic productivity while also introducing risks if misused or inadequately secured.

The mention of Anthropic during defense briefings underscores how quickly private-sector AI firms are becoming central to national security conversations.

Pete Hegseth Links AI to CORE Defense Strategy

Pete Hegseth, serving as U.S. Secretary of Defense, referenced a framework described as CORE during remarks about strengthening digital infrastructure and AI safeguards inside the Pentagon. CORE is being framed as a principles-based structure intended to guide AI acquisition, deployment, and monitoring in sensitive military contexts.

While detailed technical specifications have not been publicly released, defense officials described CORE as emphasizing:

- Cyber resilience against adversarial attacks
- Operational reliability of AI systems
- Responsible oversight and auditability
- Ethical guardrails in autonomous decision systems

The emphasis on oversight comes as AI-generated content and automated tools are increasingly used in intelligence analysis and logistics. Hegseth’s remarks signal that AI policy is no longer limited to innovation funding; it is now embedded in operational readiness planning.

AI Competition Intensifies Across Allied Nations

The AI policy shift is not limited to Washington. Governments in the U.K., Canada, and Australia have all expanded national AI strategies over the past year, focusing on regulatory alignment and security coordination with U.S. agencies.

Anthropic AI has emerged as one of several companies participating in voluntary safety commitments aimed at reducing misuse risks. These include safeguards against malicious code generation, misinformation amplification, and autonomous escalation in defense scenarios.

The broader geopolitical backdrop includes accelerating Chinese AI development and increasing export controls on advanced semiconductor chips. Western officials have stressed that maintaining AI leadership requires balancing innovation speed with guardrails.

CORE and the Future of Military AI Integration

The introduction of CORE reflects a growing recognition that artificial intelligence is no longer experimental inside defense systems. AI tools are already assisting with supply-chain optimization, predictive maintenance, satellite imagery analysis, and large-scale data interpretation.

However, integrating AI into mission-critical operations raises technical and ethical challenges:

| AI Integration Area | Strategic Concern |
|---|---|
| Autonomous systems | Human oversight in lethal decision chains |
| Intelligence analysis | Bias, hallucination risk, and reliability |
| Cyber defense | Vulnerability to adversarial manipulation |
| Logistics automation | System resilience during conflict |

Officials have emphasized that CORE is designed to ensure that AI remains a force multiplier rather than a vulnerability.

Why Anthropic AI Is Central to the Conversation

Anthropic’s focus on “constitutional AI”—a method designed to embed ethical constraints directly into model training—has made it attractive to policymakers seeking controllable systems. Its approach attempts to reduce harmful outputs and align AI responses with predefined safety guidelines.

As AI models grow more capable, scrutiny of governance structures is intensifying. Lawmakers in both parties have signaled that AI oversight could become a major legislative priority heading into 2027, especially if defense applications expand.

For now, the convergence of Anthropic's growing government role, Hegseth's defense messaging, and the rollout of CORE illustrates a turning point: artificial intelligence is no longer a peripheral tech-sector story. It is a national security issue, a diplomatic issue, and increasingly a central pillar of how modern governments plan for future conflicts.

The coming months will likely determine whether CORE becomes a formalized defense doctrine or evolves into a broader allied AI security framework spanning the United States and its closest partners.