Anthropic vs the Pentagon: AI firm in standoff over safeguards after Maduro abduction claim

An escalating dispute between the US government and Anthropic has put the company's AI safeguards under immediate pressure, after reports that its Claude software was used in a US military operation that led to the abduction of Venezuelan President Nicolás Maduro in January this year. The clash matters because it ties Anthropic’s internal safety rules to national defence contracts and classified work at the Pentagon in Washington, DC.

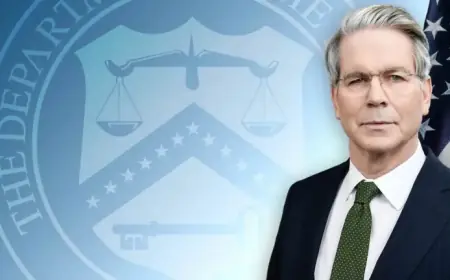

Pete Hegseth sets a Friday deadline and warns of contract loss

US Defense Secretary Pete Hegseth has given Anthropic until Friday to loosen rules on how its tools can be used by the Pentagon or risk losing its government contract, two news agencies reported on Tuesday, citing unnamed sources. The demand seeks changes to rules that restrict the department’s use of the company’s technology on classified and other defence projects housed at the Pentagon in Washington, DC.

Claude and the claimed Maduro operation

Anthropic is best known for building Claude, a popular large language model (LLM), and recent reports linked the software to a US military operation that resulted in the abduction of Venezuelan President Nicolás Maduro in January this year. Anthropic’s role as a developer of tools for defence and civilian uses is central to the dispute over whether its models should face tighter or looser operational limits.

AI safeguards and Anthropic’s refusal to back down

Anthropic is refusing to loosen safeguards that block its technology from being used for US domestic surveillance and from programming autonomous weapons capable of hitting targets without human intervention. The company positions itself as a "responsible" developer: on its website it describes itself as a "Public Benefit Corporation" committed to the "responsible development and maintenance of advanced AI for the long-term benefit of humanity."

Security breaches and a high-profile resignation

In November, Anthropic said a Chinese state-sponsored hacking group had manipulated Claude’s code in an attempt to infiltrate about 30 targets globally, naming government agencies, chemical companies, financial institutions and tech giants among those targeted, and acknowledging that some of those attempts were successful. Earlier this month, Mrinank Sharma, an AI safety researcher at Anthropic, resigned. In a post on his X account on February 9, Sharma wrote that "The world is in peril" and warned not only about AI and bioweapons but "a whole series of interconnected crises unfolding in this very moment," adding that he had repeatedly seen pressures inside the organisation to set aside core values.

Defence contracts, classified networks and partners

Last summer the Pentagon announced it was awarding defence contracts to four AI companies: Anthropic, Google, OpenAI and xAI, with each contract worth up to $200m. Anthropic was the first AI developer approved for classified military networks and reportedly works on that infrastructure with partners such as Palantir Technologies, a US software company that has been criticised for its links to the Israeli military.