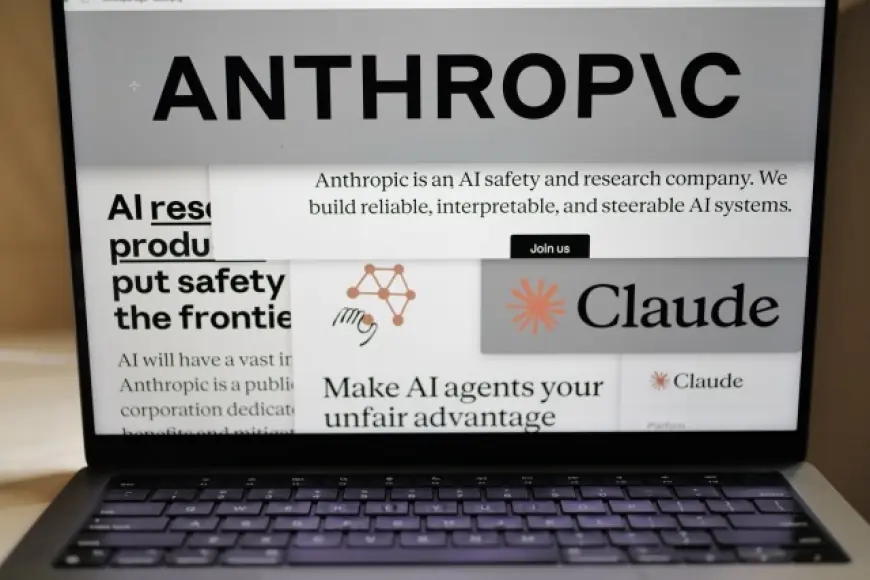

Anthropic AI Faces U.S. Federal Phaseout as Claude AI Guardrails Trigger Showdown

Anthropic is suddenly at the center of a high-stakes clash over how far government users can push frontier AI. In the latest escalation, the U.S. government has moved to halt federal use of Anthropic technology, turning a dispute about safeguards into a broader test of how “core” AI tools should be governed across defense, intelligence, and civilian agencies.

Latest: Anthropic AI Ordered Out of Federal Systems

On Friday, February 27, 2026 (ET), the White House directed U.S. federal agencies to stop using Anthropic AI, setting a six-month phaseout window for parts of government where the technology is already embedded in workflows. The move follows a breakdown in talks between Anthropic and defense officials over how Claude AI can be used, especially in contexts tied to surveillance and weapons.

The decision has immediate operational implications for federal teams that adopted Claude AI for drafting, summarization, translation, code assistance, and internal knowledge management, as well as for contractors building AI-enabled services that interface with government networks.

| Date (ET) | What happened | Why it matters |
|---|---|---|
| Feb 26, 2026 | Defense leaders signaled a deadline for broader military access to Claude AI | Set the terms for a public confrontation over AI guardrails |
| Feb 27, 2026 | Federal agencies were told to stop using Anthropic technology | Forces replacements, rewrites, and procurement pivots |
| Feb 27, 2026 | Anthropic leadership reiterated limits on certain military uses | Sharpens the debate over responsibility and control |
| Aug 27, 2026 | Six-month phaseout target for affected agencies | Sets a hard clock for migration plans and contract resets |

What Is Anthropic and What Is Anthropic AI?

For readers asking “what is Anthropic” or “what is Anthropic AI,” the company is a U.S.-based AI lab best known for Claude AI, a family of large language models designed for general-purpose tasks and enterprise deployment. The company’s approach emphasizes safety techniques and policy constraints intended to reduce harmful outputs and limit high-risk uses.

In practical terms, "Anthropic technology" is the software stack around Claude AI (models, APIs, and deployment controls) used to generate and analyze text, assist with coding, and automate knowledge work. That same utility is what makes it sensitive in government settings, where AI might be asked to support intelligence analysis, targeting, or large-scale monitoring.

Dario Amodei Defends Anthropic Technology Guardrails

Chief executive Dario Amodei has become the public face of the company's stance that certain uses should remain off-limits, even for powerful customers. Anthropic's position is that removing key safety controls could enable uses that current models cannot yet support safely and that would strain democratic norms.

This posture is not new for Anthropic; it reflects a broader "AI safety" philosophy that treats advanced models as dual-use technology: useful for productivity, but capable of amplifying harm if integrated into sensitive decision loops. Still, the speed and scale of the federal response mark a rare moment in which a leading AI vendor's red lines collide directly with national security procurement.

Pete Hegseth, Defense Pressure, and the Military Use Debate

Defense Secretary Pete Hegseth has been central to the standoff, pressing for fewer restrictions on how military users can employ Claude AI. The dispute has focused on whether the government should be able to use Anthropic AI in ways that could support mass surveillance or enable autonomous weapons functions without meaningful human control.

This conflict is bigger than a single contract. It raises a foundational question for “core AI” capabilities in government: when an agency licenses a frontier model, does it buy an adaptable tool that can be repurposed for any mission set, or a bounded product whose maker can limit high-risk applications?

Claude AI as Core AI Infrastructure: Supply Risk, Competition, and Knock-On Effects

Claude AI has become a common layer in enterprise AI stacks, and government adoption signaled that Anthropic was moving into the category of “core” AI suppliers. A sudden federal shift away from Anthropic technology can ripple outward in three ways:

- Migration costs: Agencies and contractors may need to retrain staff, rebuild prompts and workflows, revalidate security controls, and re-audit outputs for compliance.
- Procurement reshuffling: A phaseout creates an opening for competitors to pitch "safe enough" alternatives that promise faster deployment and fewer policy constraints.
- Policy precedent: If one frontier vendor can be sidelined over guardrails, other AI providers will face pressure to clarify their own limits for defense and intelligence use cases.

What Comes Next for Anthropic, Anthropic AI, and AI Policy

In the near term, the practical story is operational: how quickly agencies can replace Anthropic AI without breaking critical internal systems, and whether contractors will be barred from using Claude AI in projects that touch federal networks. In the medium term, the political story is sharper: lawmakers and regulators will likely debate whether private AI labs should be allowed to enforce usage boundaries, or whether the government should dictate terms for any AI considered essential to national security.

For the public, the answer to the question many readers search for, "what is Anthropic AI," has changed: it is no longer just a productivity tool. It is a contested piece of national infrastructure, caught between the promise of automation and the risk of letting AI drift from assistance into authority.