Microsoft Asserts Copilot’s Purpose Beyond Mere Entertainment

Microsoft is set to update its Copilot Terms of Use after public scrutiny of how the document describes the product. Users recently discovered that the terms describe Copilot as being “for entertainment purposes only,” which contrasts sharply with its marketing as a robust AI tool.

Copilot’s Inconsistent Messaging

A Microsoft spokesperson clarified that the outdated phrasing dates from Copilot’s initial launch as a search companion within Bing. The company acknowledged that the language no longer reflects the tool’s current functionality and said the user agreement is expected to change in the next update.

Social media users voiced concerns about relying on Copilot. The existing terms state that the AI can make errors and should not be trusted for important decisions, which has raised questions about Microsoft’s confidence in its AI offerings.

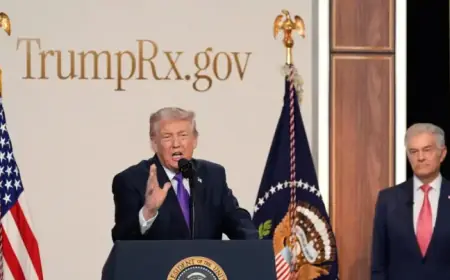

Statements from Leadership

During a January earnings call, CEO Satya Nadella touted Copilot’s capabilities, emphasizing its accuracy and performance powered by an intelligence tool known as Work IQ. This contrasts starkly with the terms, which imply limited reliability.

Comparison with Competitors

The Copilot Terms of Use are not the only agreement that binds users. Users must also accept Microsoft’s broader Services Agreement, whose AI-related sections lack the contentious “entertainment purposes” language.

Other AI companies, such as OpenAI, Anthropic, and Meta, do not use “entertainment” terminology in their user agreements. They do, however, include language designed to limit liability while clarifying how their AI tools should be used.

- OpenAI: Requires users to acknowledge the risks of relying solely on AI outputs.

- xAI: Requires users to indemnify the company against legal claims.

- Meta: Prohibits using AI for professional advice in regulated sectors such as medicine and law.

Legal Challenges Surrounding AI

As generative AI technology matures, legal challenges are escalating. OpenAI currently faces multiple lawsuits, including one alleging that its AI model caused harm to users. Liability for AI output is becoming a prominent legal issue.

Nippon Insurance Company also recently filed suit against OpenAI, over legal challenges brought by a customer that it attributes to AI advice. OpenAI has asked the public to await further information on legal matters related to mental health cases and says it will continue to improve its models.

As Microsoft navigates these updates, the focus remains on balancing user expectations with the capabilities and responsibilities of advanced AI tools like Copilot.