ICO Seeks Answers From Meta After Report Says Human Reviewers Viewed Intimate Smart‑glasses Footage

The UK data regulator has written to Meta after a probe found that subcontracted workers were at times able to view sensitive material filmed by Meta smart glasses. The move signals regulatory scrutiny of how footage captured by wearable AI devices is handled and whether users are given clear control and transparency.

Meta contractors in Nairobi and the Sama connection

The investigation identified data annotators employed by a Nairobi‑based outsourcing firm, Sama, who trained AI by manually labelling images and videos captured by the company’s AI‑enabled Ray‑Ban smart glasses. Workers described seeing a wide range of private material, with one saying, “We see everything - from living rooms to naked bodies.” Examples cited include footage of glasses wearers using the toilet and footage of intimate encounters.

Meta has acknowledged that subcontracted workers can sometimes review content captured by its glasses in order to improve the user experience and that some automated filters—such as blurring faces—are applied before review. The company also makes clear in its UK AI terms of service that interactions with AIs may be reviewed either automatically or manually. Nevertheless, the regulator has stated that devices processing personal data should put users in control and provide appropriate transparency about what data is collected and how it is used.

As a direct consequence of the findings, the Information Commissioner’s Office (ICO) said the claims are concerning and that it will be writing to Meta to request information on how the company meets its obligations under UK data protection law. That formal request marks an official step toward probing whether Meta’s practices and disclosures satisfy legal expectations for privacy safeguards and user notice.

Nearby Glasses app and Bluetooth identifier 0x004C

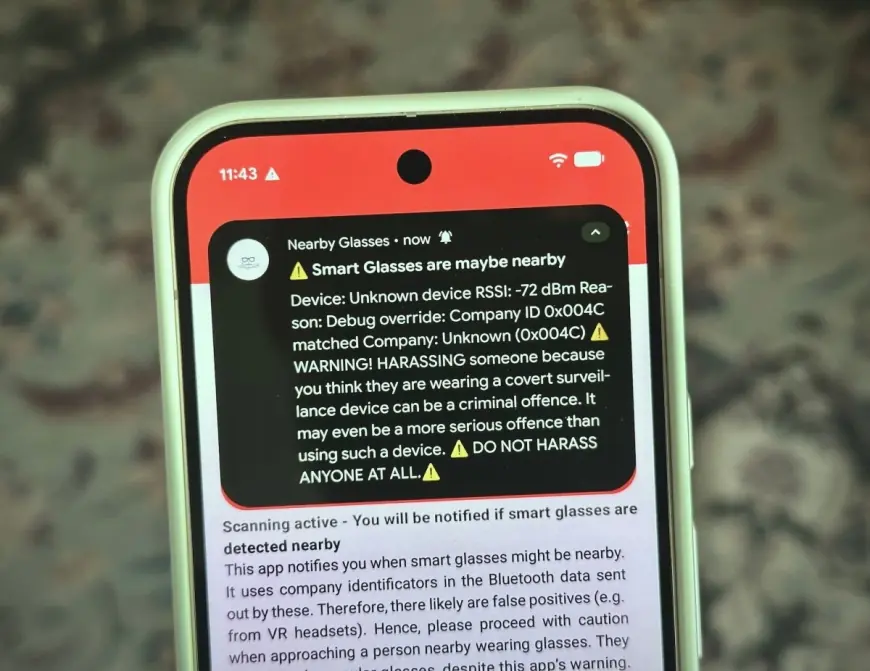

Privacy worries around always‑recording eyewear have prompted technical responses. An Android app called Nearby Glasses was developed to scan for Bluetooth signals emitted by wearable devices and alert users when it detects devices made by manufacturers such as Meta, Snap, and Oakley. The app listens for the publicly assigned Bluetooth identifier that is unique to each device maker, and it can also accept user‑added identifiers; the developer demonstrated this by adding identifier 0x004C to look for Apple devices.

In testing, adding 0x004C caused the test phone to flood with alerts, demonstrating the app’s sensitivity to nearby manufacturer identifiers and the likelihood of many notifications in dense environments. The app’s author, Yves Jeanrenaud, cautioned that the tool can produce false positives (for example, confusing a virtual reality headset with a pair of smart glasses from the same manufacturer), which makes its alerts less than conclusive but does not remove its practical utility as an early warning system. Jeanrenaud said demand exists for an iPhone version but that development depends on his available time.
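
For readers curious how matching on a manufacturer identifier can work, the following is a minimal Python sketch, not the Nearby Glasses code. It assumes the identifier is read from the manufacturer-specific data field of a Bluetooth Low Energy advertisement (type 0xFF), where the first two bytes carry the maker's identifier in little-endian order. The function names, the watch list, and the sample payloads are hypothetical; only 0x004C comes from the developer's demonstration.

```python
from typing import Iterator, Optional, Set, Tuple

# Advertisement field type for manufacturer-specific data.
MANUFACTURER_SPECIFIC_DATA = 0xFF

# Hypothetical watch list. 0x004C (Apple) is the only value taken from the
# article; a real list would hold the identifiers of the makers of interest.
WATCHED_IDS: Set[int] = {0x004C}


def iter_ad_structures(payload: bytes) -> Iterator[Tuple[int, bytes]]:
    """Yield (type, data) pairs from a raw advertising payload.

    Each field is laid out as: one length byte, one type byte, then data.
    The length byte counts the type byte plus the data bytes.
    """
    i = 0
    while i < len(payload):
        length = payload[i]
        end = i + 1 + length
        if length == 0 or end > len(payload):
            break  # padding or a truncated field: stop parsing
        yield payload[i + 1], payload[i + 2:end]
        i = end


def company_id(payload: bytes) -> Optional[int]:
    """Return the 16-bit manufacturer identifier, or None if absent."""
    for ad_type, data in iter_ad_structures(payload):
        if ad_type == MANUFACTURER_SPECIFIC_DATA and len(data) >= 2:
            return int.from_bytes(data[:2], "little")
    return None


def should_alert(payload: bytes, watched: Set[int] = WATCHED_IDS) -> bool:
    """True when the advertisement comes from a watched manufacturer."""
    return company_id(payload) in watched


# Synthetic payloads for illustration only (not captured from real devices).
apple_like = bytes([0x02, 0x01, 0x06, 0x05, 0xFF, 0x4C, 0x00, 0x12, 0x34])
other_maker = bytes([0x03, 0xFF, 0xFF, 0xFF])

print(should_alert(apple_like))
print(should_alert(other_maker))
```

Because the check in this sketch matches the maker rather than a specific product, every nearby device from a watched manufacturer triggers it. That is consistent with both the flood of alerts seen when 0x004C was added and the headset-versus-glasses false positives Jeanrenaud described.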

The emergence of the app reflects rising public resistance to devices that can record or process information about people who have not consented. Developers and advocates stress that technical detection tools cannot replace legal or policy change, but they can provide immediate, if imperfect, protection for bystanders who worry about covert recording.

What makes this notable is the convergence of three elements: a regulator initiating formal inquiry, on‑the‑ground testimony from outsourced reviewers about the sensitivity of material seen, and consumer tools designed to detect the devices themselves. Together, these developments have pushed privacy questions about AI‑enabled wearables from abstract debate into concrete regulatory and technological responses.

Meta maintains that it takes data protection seriously and is constantly refining its tools and processes. Users must activate recording manually or by voice command, but the disclosure that human review can occur means the company’s filtering and transparency mechanisms are now a focal point of the ICO’s inquiries and of public scrutiny.

The ICO’s letter to Meta will seek detailed information about how the company explains data collection and use, how filters are applied, and what safeguards are in place for content that may contain intimate or highly personal material. The outcome of that exchange will determine whether further regulatory action follows.