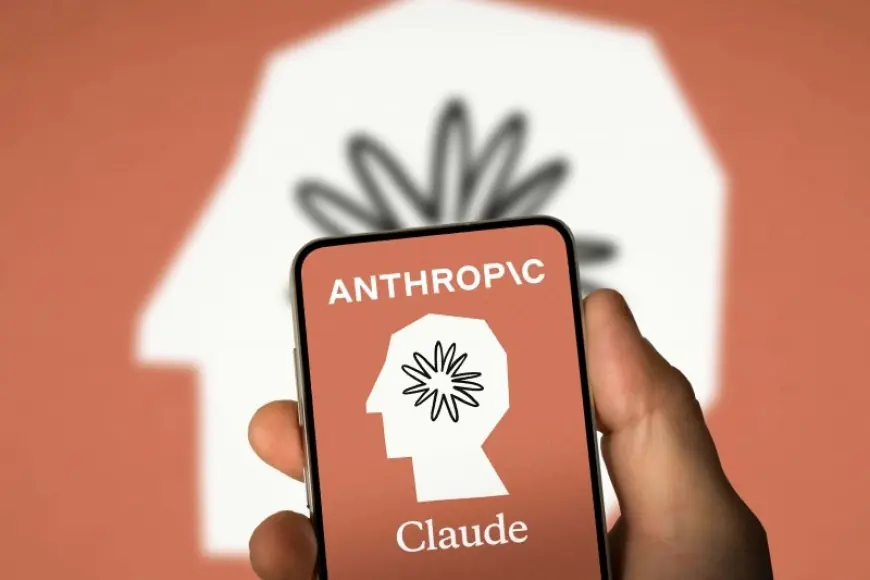

Claude outage sparks elevated error reports, with login failures hitting many users Monday

Claude users ran into a service disruption Monday morning, with widespread reports of “elevated errors.” The pattern looked less like a full shutdown and more like a front-door jam: people could not reliably sign in, load chats, or complete requests, even as some developer integrations appeared to keep working. The first public incident note appeared at 6:49 a.m. ET on March 2, 2026, and the early troubleshooting guidance from engineers watching the symptoms was simple: expect intermittent failures until authentication and session handling stabilize.

For anyone asking “is Claude down,” the most accurate answer for much of the morning was: parts of Claude were down for a meaningful share of users, especially the consumer-facing experience that depends on logging in and keeping a session alive. Some users could still get responses; others were blocked at the gate.

Is Claude down right now? Why “partial outage” felt like a total break

Outages like this tend to split the world into two groups: those who can get in and those who can’t, often at the same moment. That’s because the core model service and the systems around it don’t fail in the same way. When the model layer is the problem, nearly everyone sees consistent failures. When the access layer is the problem—identity, session tokens, routing, or rate limiting—results can vary wildly depending on region, timing, and how your account is authenticated.

Monday’s disruption had the fingerprints of that second category. Users described sudden spikes in timeouts, repeated “try again” behavior, and sessions that stalled mid-request. Others reported being unable to log in at all, or being kicked out and then stuck. The practical effect is that normal self-help rituals—refreshing the page, restarting the app, switching networks—sometimes worked and sometimes made no difference, because the bottleneck wasn’t on the user’s device. It was upstream, where traffic and authentication converge.

That variability also amplifies frustration. If your coworker can access Claude while you can’t, it’s tempting to assume the issue is personal. In reality, an overloaded authentication or session layer can fail probabilistically: a fraction of token refreshes error out, retries pile up, and the retry storm becomes part of the outage. A small fault can suddenly look enormous.
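To put a rough number on that amplification, here is a back-of-the-envelope sketch in Python. It assumes every attempt fails independently with the same probability and that clients retry immediately up to a fixed limit. Both are simplifications, and the function name and parameters are illustrative rather than taken from any incident report. In a real retry storm the failure rate climbs as load rises, so this understates the effect.

```python
def expected_attempts(failure_rate, max_retries):
    """Average number of requests one user action generates.

    Assumes each attempt fails independently with probability
    `failure_rate` and the client retries at once, up to `max_retries`
    times. Attempt i+1 only happens if the first i attempts all failed,
    so it occurs with probability failure_rate ** i.
    """
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    if max_retries < 0:
        raise ValueError("max_retries must be non-negative")
    return sum(failure_rate ** i for i in range(max_retries + 1))


if __name__ == "__main__":
    # Half of all attempts failing, with three automatic retries.
    print(expected_attempts(0.5, 3))  # 1.875
```

Under these assumptions, a 50 percent failure rate with three retries means each user action produces 1.875 requests on average, nearly double the normal load on a layer that is already struggling.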

Claude down reports surge as login and session systems become the choke point

The clearest signal from the incident notes was the emphasis on login and logout paths. That matters because authentication failures don’t just block new users; they also destabilize existing sessions. If a session refresh fails, a user who was working normally can be dropped into an error loop. When enough people experience that at once, traffic patterns shift: fewer successful requests reach the model, while more clients hammer the identity layer trying to recover.

This is also where “partial outage” can be deceptively severe. A platform can be technically online, but if enough users can’t authenticate, the service is effectively unavailable to them. And because modern AI products are used in bursts—people tend to log in at the start of a work block—pressure often arrives in waves. If the system recovers briefly, a backlog of users immediately tries again, which can trigger another dip.

There’s a second-order consequence too: when the web experience is unreliable, users who have alternative access routes—developer tools, enterprise setups, automated workflows—may continue working, while everyone else is locked out. That unevenness can distort perceptions of the outage, but it also provides a clue: if programmatic access remains steadier than the consumer app, the failure domain is likely around identity, sessions, or the web-facing request pipeline rather than the model compute itself.

What to do during a Claude outage, and the signals that matter next

If you’re stuck mid-task, the best move is to treat this as a platform availability event, not a local glitch. Repeated rapid refreshes can backfire when systems are already saturated. Instead, space out retries. If you can reach the chat interface but requests fail intermittently, keep prompts shorter and avoid multi-step runs that depend on session continuity. If you’re blocked at sign-in, the problem is unlikely to be fixed by device changes alone.
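For automated clients, "space out retries" usually means exponential backoff with random jitter. The following is a minimal Python sketch of that general technique, not a description of how any Claude client actually behaves. The `request` callable, the default limits, and the function name are all assumptions made for illustration.

```python
import random
import time


def call_with_backoff(request, max_attempts=5, base=1.0, cap=60.0,
                      sleep=time.sleep, rng=None):
    """Call `request()` until it succeeds or attempts run out.

    `request` is a hypothetical zero-argument callable that raises an
    exception on failure. After each failure the wait ceiling doubles
    (base, 2*base, 4*base, ...) up to `cap`, and the actual wait is a
    random value between 0 and that ceiling ("full jitter"), so many
    clients recovering at once do not retry in lockstep.
    """
    rng = rng or random.Random()
    for attempt in range(max_attempts):
        try:
            return request()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            ceiling = min(cap, base * (2 ** attempt))
            sleep(rng.uniform(0, ceiling))
```

The randomness is the important part. If every client waited exactly one second, then two, then four, they would all return at the same instants and recreate the spike that caused the trouble.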

The next steps now hinge on how the service team resolves the underlying constraint. There are a handful of common recovery paths, and the differences matter for users planning their day.

One scenario is a rapid stabilization after a rollback or configuration change. In that case, error rates fall quickly and logins start succeeding in a broad, noticeable way. A second scenario is partial recovery with lingering pockets of failure: the main interface returns, but specific authentication flows—certain devices, single sign-on variants, or session refresh—remain shaky until caches and token stores settle. A third scenario is recurrence under load: things look better, then degrade again when a new wave of users tries to log in at once. A fourth scenario is a longer, maintenance-like fix where the team has identified a deeper issue and pushes incremental changes to avoid locking more users out.

What’s still unknown publicly is the root cause—whether this was a recent deployment that misbehaved, a dependency failure in identity infrastructure, or a demand surge that overwhelmed a critical component. But the mechanism implied by the symptoms is consistent: when login and session systems buckle, everything downstream feels unreliable.

For teams relying on Claude in production workflows, the practical takeaway is operational: build in graceful failure. Save critical prompts and outputs locally rather than assuming a session will persist. Avoid scheduling high-stakes deliverables around a single external service. And when the platform returns, expect a short period of unevenness as traffic normalizes—because the first thing everyone does after an outage is try again at the same time.
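One way to act on the "save locally" advice is to write every prompt and its outcome to a local file, whether or not the call succeeds. The Python sketch below illustrates the idea under stated assumptions: `send` stands in for whatever function a team uses to reach the service, and the JSON Lines log format is a choice made here for simplicity, not a recommendation from the service provider.

```python
import json
import time
from pathlib import Path


def run_with_local_log(prompt, send, log_path):
    """Send a prompt and append one JSON record of the outcome to disk.

    `send` is a hypothetical callable that takes the prompt text and
    returns the response text, raising an exception on failure. A
    record is written either way, so the prompt survives an outage and
    can be replayed later. The record is also returned so the caller
    can check `status` and fall back instead of crashing.
    """
    record = {"ts": time.time(), "prompt": prompt,
              "status": "pending", "output": None}
    try:
        record["output"] = send(prompt)
        record["status"] = "ok"
    except Exception as exc:
        record["status"] = "failed"
        record["error"] = str(exc)
    # Append-only log: one JSON object per line.
    with Path(log_path).open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return record
```

A caller that sees `status == "failed"` can queue the work, switch to a manual step, or alert a person, and the failed prompts remain in the log for replay once the service stabilizes.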

Monday’s disruption underscored a simple reality of the AI boom: the models may be powerful, but the user experience depends just as much on the unglamorous layers—identity, sessions, and routing—that determine whether a question ever reaches the model in the first place.