Comprehensive AI Glossary: Navigating LLMs to Hallucinations

Artificial intelligence encompasses a complex array of concepts, technical terms, and evolving methodologies. As the field progresses, so do the definitions and implications of various terms used within it. This glossary serves as a comprehensive resource for understanding critical terms related to AI, particularly focusing on large language models (LLMs) and the concept of AI hallucinations. This resource will be updated regularly to reflect advancements in the industry.

Key Terms in Artificial Intelligence

- Artificial General Intelligence (AGI): Generally refers to AI that matches or outperforms humans at most tasks. There is no single agreed definition; organizations such as OpenAI and Google DeepMind each describe it differently.

- AI Agent: A tool that uses AI to carry out multistep tasks on a user’s behalf, such as booking reservations or writing code.

- Chain-of-Thought Reasoning: Breaking a complex problem into smaller intermediate steps to improve the accuracy of the final answer, especially in logic or coding tasks.

- Compute: Refers to the computational power required to train and deploy AI models, typically provided by hardware like GPUs and TPUs.

- Deep Learning: A subset of machine learning that uses multi-layered artificial neural networks to learn patterns directly from data, without humans hand-defining the relevant features. It typically requires vast amounts of data.

- Diffusion: A technique in generative AI in which a model learns to reconstruct data by reversing a process that gradually ‘destroys’ it with added noise.

- Distillation: A technique that transfers knowledge from a larger ‘teacher’ model to a smaller ‘student’ model, typically by training the student on the teacher’s outputs, yielding a smaller and more efficient model.

- Fine-Tuning: Further training an already-trained AI model on specialized data to improve its performance on a specific task or domain.

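- Generative Adversarial Networks (GANs): A framework in which two neural networks compete: a generator produces candidate data, and a discriminator tries to tell it apart from real data. The competition pushes the generator toward realistic output.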

- Hallucination: An instance in which an AI model generates fabricated or incorrect information, often presented as fact, posing risks in various applications.

- Inference: The process of running a trained AI model to make predictions on new inputs, drawing on patterns it learned from previously seen data.
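To make the Diffusion entry above more concrete, here is a minimal sketch of the ‘destroying’ half of the process only: blending a clean sample with Gaussian noise according to a schedule. The function names, the linear schedule, and its start and end values are illustrative assumptions, not taken from any particular model; a real diffusion model would additionally train a neural network to reverse these steps.

```python
import math
import random


def alpha_bar_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Fraction of the original signal retained after each step.

    Uses a linear noise schedule; the start/end values are assumed for illustration.
    """
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / max(num_steps - 1, 1)
        running *= 1.0 - beta  # each step keeps (1 - beta) of the remaining signal
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, alpha_bar, rng):
    """Forward (noising) step: mix the clean sample with Gaussian noise."""
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    return [signal_scale * v + noise_scale * rng.gauss(0.0, 1.0) for v in x0]


rng = random.Random(0)  # fixed seed so runs are repeatable
clean = [1.0, -1.0, 0.5, 0.0]
schedule = alpha_bar_schedule(1000)

slightly_noisy = add_noise(clean, schedule[0], rng)   # still close to the clean sample
mostly_noise = add_noise(clean, schedule[-1], rng)    # almost no original signal left
```

Early in the schedule the sample is barely changed; by the last step nearly all of the original signal has been replaced by noise, which is the state a generative model would start from when sampling.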

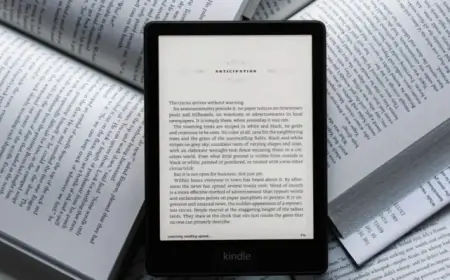

- Large Language Models (LLMs): These models form the backbone of AI assistants, processing and generating human-like text based on extensive training data.

- Memory Cache: An optimization that speeds up AI inference by storing the results of past calculations so they do not have to be recomputed. Key-value (KV) caching in transformer models is a common example.

- Neural Network: A multi-layered computational architecture loosely modeled on the human brain’s interconnected neurons, and the foundation of deep learning.

- RAMageddon: A term reflecting the growing shortage of random access memory, leading to price increases across various tech sectors.

- Training: The process of feeding data into AI models so they can learn patterns and generate outputs.

- Tokens: The discrete units of text that an LLM reads and writes. Tokenization splits raw input into these units so the model can process a query and generate a response.

- Transfer Learning: A method in which a previously trained model is used as the starting point for a new model on a different but related task, reusing what it already learned and speeding up development.

- Weights: Numerical parameters that determine how much importance a model gives to different features of its input. They are adjusted during training and shape the model’s outputs.
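As a rough illustration of the Tokens entry above, the following toy sketch splits text into word and punctuation tokens and maps each one to an integer ID. Everything here (the function names, the regular expression, the `<unk>` placeholder) is a simplifying assumption for illustration; production LLMs generally use learned subword schemes such as byte-pair encoding rather than whole words.

```python
import re


def split_tokens(text):
    """Lowercase the text, then split it into word runs and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text.lower())


def build_vocab(corpus):
    """Assign an integer ID to each distinct token; ID 0 is reserved for unknown tokens."""
    vocab = {"<unk>": 0}
    for text in corpus:
        for token in split_tokens(text):
            vocab.setdefault(token, len(vocab))
    return vocab


def encode(text, vocab):
    """Turn text into the list of token IDs a model would actually consume."""
    return [vocab.get(token, vocab["<unk>"]) for token in split_tokens(text)]


def decode(ids, vocab):
    """Map token IDs back to their token strings."""
    id_to_token = {i: t for t, i in vocab.items()}
    return [id_to_token[i] for i in ids]


vocab = build_vocab(["What is a token?", "A token is a unit of text."])
ids = encode("Is a token a unit?", vocab)
print(ids)                 # [2, 3, 4, 3, 6, 5]
print(decode(ids, vocab))  # ['is', 'a', 'token', 'a', 'unit', '?']
```

A word that never appeared in the corpus, such as "hallucination", falls back to ID 0 in this sketch. Subword tokenizers avoid that by breaking unfamiliar words into smaller known pieces.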

Understanding AI Hallucinations

AI hallucinations refer to the creation of false information by AI models. This phenomenon can lead to misleading outputs and significant risks, particularly in critical areas like healthcare. The issue often results from gaps in training data, especially in general-purpose models. Ongoing advancements aim to develop more specialized AI systems to mitigate these risks and improve data accuracy.

The Future of AI

The landscape of AI continues to evolve as researchers discover new methodologies and potential risks. With an increasing reliance on complex systems like LLMs and the push for specialized AI models, understanding these terms is crucial. Keeping abreast of these developments will help both professionals and users navigate the intricate world of artificial intelligence more effectively.