Pentagon Sets Friday Deadline, Threatens to Isolate Anthropic over AI Safeguards

The U.S. Defense Department has pressed Anthropic to loosen rules on the use of its AI tools, giving the company until Friday to comply or face losing its government contract. The dispute has intensified after an alleged use of Anthropic’s Claude in a classified operation and comes amid broader concerns over surveillance and autonomous-weapons restrictions.

Pete Hegseth’s Friday deadline

On Tuesday, Defense Secretary Pete Hegseth gave Anthropic until Friday to relax the guardrails that limit how its technologies may be used by the Pentagon, warning the company it risks losing its government contract if it does not comply. The timeline is short: the demand came on Tuesday, the response is due on Friday, and the stated consequence of refusal is potential contract termination.

Anthropic AI safeguards and the Pentagon’s objections

Anthropic has refused to back down from safeguards that bar its technology from being used for US domestic surveillance and from programming autonomous weapons that can strike targets without human intervention. The Pentagon’s push to loosen those safeguards is presented as a direct cause of the current standoff: officials want broader operational latitude, and Anthropic’s refusal could lead to the loss of access to classified military networks and government business.

Claude and the January operation involving Nicolás Maduro

Recent accounts say Anthropic’s Claude software was used in a US military operation that resulted in the abduction of Venezuelan President Nicolás Maduro in January this year. That alleged deployment of Claude on a classified mission has added urgency to the Defense Department’s demands and prompted public scrutiny of how the company’s systems are permitted to operate in defense contexts.

Mrinank Sharma’s resignation and X statements

Earlier this month, Mrinank Sharma, an AI safety researcher at Anthropic, resigned, posting statements on his X account on February 9. He wrote: "The world is in peril. And not just from AI, or bioweapons, but from whole series of interconnected crises unfolding in this very moment." He went on: "Moreover, throughout my time here, I’ve repeatedly seen how hard it is to truly let our values govern our actions. I’ve seen this within myself, within the organization, where we constantly face pressures to set aside what matters most, and throughout broader society too." Sharma’s departure and messages underscore internal tensions about how AI should be developed and deployed.

November hacking claim and the attempt on about 30 targets

In November, Anthropic alleged that a Chinese state-sponsored hacking group had manipulated Claude’s code in an effort to infiltrate roughly 30 targets worldwide, naming government agencies, chemical companies, financial institutions and technology giants among those targeted. Some of those attempts succeeded. That hacking episode is part of the company’s public record of security challenges and factors into debates over the risks and resilience of AI systems used in sensitive settings.

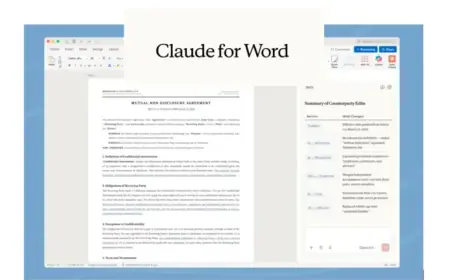

Pentagon contracts, Palantir partnership and Anthropic’s origins

Last summer, the Pentagon awarded defence contracts to four companies — Anthropic, Google, OpenAI and xAI — with each contract worth up to $200 million. Anthropic was the first AI developer approved for classified military networks and works on those networks with partners such as Palantir Technologies, a US software firm that has faced criticism over links to the Israeli military. Anthropic was founded in 2021 by former OpenAI executives and is best known for building Claude, a large language model that can summarise text, analyse data, translate, transcribe and draft memos.

LLMs are a category of technology that generates text, visual or audio output by analysing vast datasets such as books, archives, websites, pictures and videos. For defence use they can compress large volumes of information but could also, in theory, support autonomous or semi-autonomous weapons systems that identify and hit targets without human instruction, a use most AI companies explicitly prohibit. Anthropic presents itself as a "Public Benefit Corporation" committed to the "responsible development and maintenance of advanced AI for the long-term benefit of humanity."

What makes this notable is the collision of three threads: a recent alleged operational deployment of Claude linked to a high‑profile abduction, documented security breaches in November that touched about 30 targets, and a visible internal protest in the form of Sharma’s resignation and public warnings. The timing matters because the Pentagon has already contracted multiple AI developers for classified work and has set contract ceilings at $200 million per company, raising the stakes for both national security officials and Anthropic’s commercial trajectory.

As the Friday deadline approaches, the immediate effect is clear: Anthropic faces a binary choice between loosening restrictions that it says protect against domestic surveillance and autonomous weaponisation, or risking removal from sensitive Pentagon programs and the practical isolation that could follow.